Controllable Latent Space of Cultural Heritage GANs

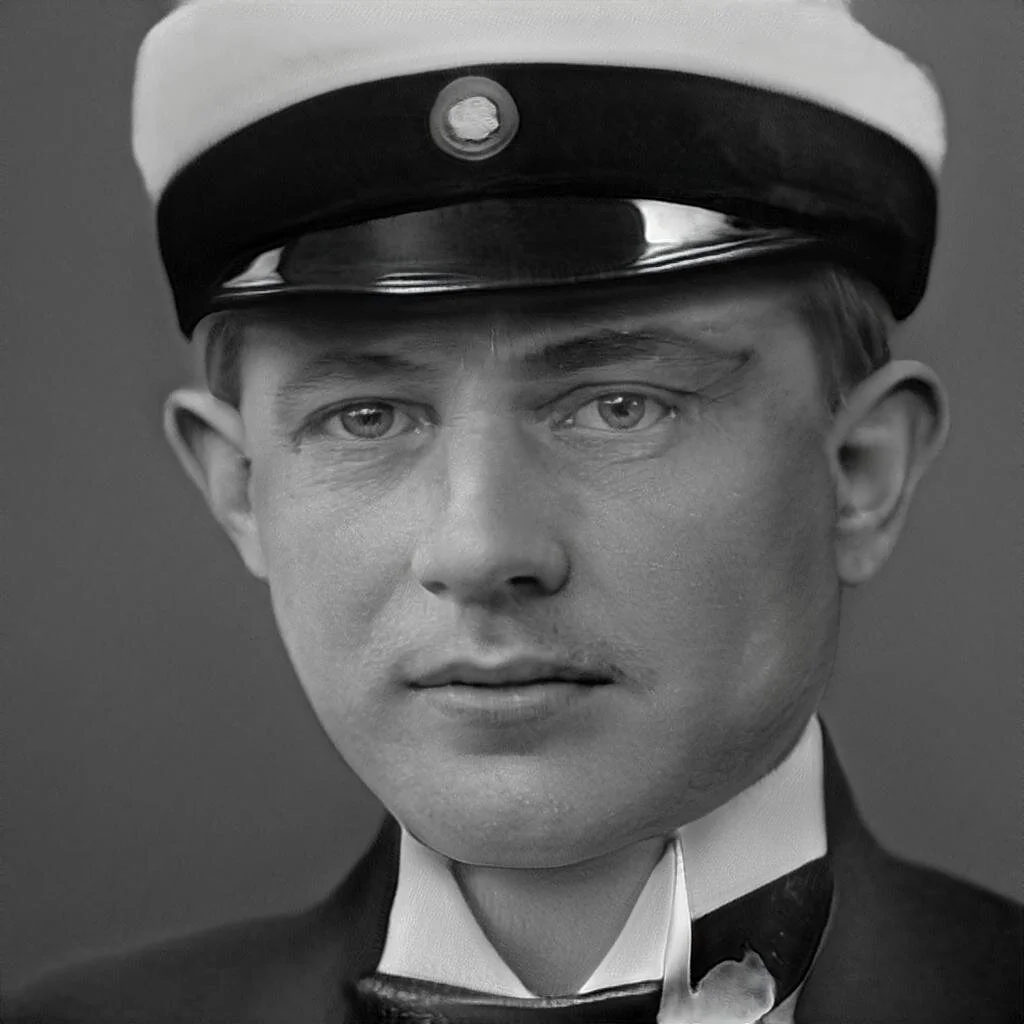

Images produced by training StyleGAN2-ADA on 19,372 portraits taken in Lund, Sweden.

A few years ago I shared some preliminary results from running Generative Adversarial Networks on historic photo collections. The state of the art in GANs has advanced quite rapidly, with important discoveries of how to carefully grow the size of the networks and how to tease out semantic “styles” (male/female, blonde/brown-haired, profile/front-on) latent in the underlying datasets of tens of thousands of images. These innovations have combined to make GAN-generated images even more realistic (and larger in size) than most anyone thought possible. There has been a good set of questions raised about the problems of false images that are ever more photo-realistic, in outlets as mainstream as the New York Times.

Still, most discussions surround contemporary portraiture: the faces that are useful in the profile pictures of Twitter bots, or virtual assistants, or scam artists trawling dating websites. I’m more interested in the vast amount of material that museums and libraries have collected, cataloged, preserved, digitized, and placed online. Can GANs learn the characteristics of these datasets, which differ substantially from modern digital photography?

The results above would suggest the answer is yes. Trained on a ground truth dataset of roughly 19,000 portraits taken in the Swedish city of Lund in the early 20th century, NVIDIA’s new StyleGAN2-ADA network was able to produce these new faces, which capture the distinctive black-and-white tonality of the originals as well as some distinctive socio-cultural characteristics, such as the studentmössa that graduating college students would have worn (second picture in the row above).

Though these pictures may look nearly indistinguishable from actual portraits taken in the early 20th century, they are in fact outputs from a network with near-infinite variability: random samples, if you will, from a large latent space of representation. One way to visualize this is to explore the latent space by traversing the points between portraits such as the ones above. This is a lot easier to show than to explain. In this morphing video, we are traversing the latent space between the young girl (Seed 9) and the older balding man (Seed 8).
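For readers who want to see what "traversing the points between" two seeds means numerically, here is a minimal sketch. It assumes a 512-dimensional Gaussian latent space and a seed-to-vector convention based on NumPy's `RandomState`; the helper names (`latent_from_seed`, `slerp`, `traverse`) are illustrative, spherical interpolation is one common choice rather than necessarily what produced the video, and the step of feeding each vector to the trained generator is left out.

```python
import numpy as np

Z_DIM = 512  # assumed latent size; adjust to match the trained network


def latent_from_seed(seed, z_dim=Z_DIM):
    """Draw a reproducible Gaussian latent vector for a given seed."""
    return np.random.RandomState(seed).randn(z_dim)


def slerp(z0, z1, t):
    """Spherical interpolation between two latent vectors at fraction t."""
    u0 = z0 / np.linalg.norm(z0)
    u1 = z1 / np.linalg.norm(z1)
    # Angle between the two vectors, clipped for numerical safety.
    omega = np.arccos(np.clip(np.dot(u0, u1), -1.0, 1.0))
    if np.isclose(omega, 0.0):
        # Vectors are (almost) parallel: fall back to a straight line.
        return (1.0 - t) * z0 + t * z1
    return (np.sin((1.0 - t) * omega) * z0 + np.sin(t * omega) * z1) / np.sin(omega)


def traverse(seed_a, seed_b, steps=60):
    """Return a (steps, z_dim) array of latents running from seed_a to seed_b."""
    z_a, z_b = latent_from_seed(seed_a), latent_from_seed(seed_b)
    return np.stack([slerp(z_a, z_b, t) for t in np.linspace(0.0, 1.0, steps)])


# One latent per video frame, from Seed 9 to Seed 8.
frames = traverse(9, 8, steps=60)
```

Each row of `frames` would be rendered to one image by the generator; played in sequence, the images give the morphing effect.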

Real Fakes or just Memorized?

One of the questions that people ask of these generative networks is: how can we be sure the images they produce are truly “novel”? That is to say, is it possible the network is merely memorizing faces already present in the training data and regurgitating them, in which case they would obviously pass muster when compared with the ground truth? (We might think of this as the deep learning counterpart of over-fitting in traditional statistics.)

I think the answer may be domain-dependent: surely the way we measure novelty in cars versus toasters versus human faces will differ. But for faces, we have the advantage of a number of deep learning networks which are used for the purposes of face identification. Although I’ve never used these networks to identify real humans (and would not do so for any reason), I think it’s an acceptable use of the technology to measure how well generative networks perform. By measuring the ‘distance’ between a generated face and all the faces present in the training material, we can see if we’re merely echoing back something we already had, or creating something novel.
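The distance check described above can be sketched as a nearest-neighbor search over face embeddings. This is only an illustration under stated assumptions: the embeddings are taken to be fixed-length vectors already produced by some face-identification network (not shown here), cosine distance is one reasonable metric among several, and the random arrays below are placeholders standing in for real embeddings.

```python
import numpy as np


def l2_normalize(x):
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def nearest_training_distance(generated, training):
    """For each generated embedding, return the cosine distance to, and index of,
    its closest training embedding."""
    g = l2_normalize(generated)
    t = l2_normalize(training)
    dist = 1.0 - g @ t.T                      # shape: (n_generated, n_training)
    idx = dist.argmin(axis=1)
    return dist[np.arange(len(g)), idx], idx


# Placeholder data: 1,000 "training" embeddings of an assumed size of 128.
rng = np.random.default_rng(0)
training = rng.normal(size=(1000, 128))

# One unrelated embedding, and one near-copy of training item 42
# (a stand-in for a memorized face).
novel = rng.normal(size=(1, 128))
memorized = training[42:43] + 0.01 * rng.normal(size=(1, 128))
generated = np.vstack([novel, memorized])

distances, indices = nearest_training_distance(generated, training)
```

A generated face whose nearest-neighbor distance is close to zero is a candidate for memorization; where to draw the threshold depends on the embedding network and would need to be calibrated against known pairs of distinct people.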

Latent Representational Space

The other way to make these generative networks more interesting is to not just passively accept what they spit out, but rather involve ourselves more deeply in the act of generation. In the screencast below, you can see me adjusting sliders that control the latents, or vectors of possibility that control the output of the generative network.

It’s worth watching carefully as the changes occur. Some are obvious, such as a shift from youth to old age, or feminine to masculine appearance. Others are more subtle, such as a shift from lighting the right versus the left side of the face. And there’s one obvious “studentmössa” vector: the appearance at 0:30 of that white hat which students in Sweden wear when they graduate from college. You can imagine the ways companies discover, document, and instrumentalize such specific representational vectors for use in commercial products based on AI. (Or at least, the way they did before diffusion models overtook generative adversarial models circa 2022.)
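To make the idea of a "vector" for a trait concrete, here is a minimal sketch of one simple way to estimate such a direction: subtract the mean latent of images without the trait from the mean latent of images with it, then move a latent along that direction by a slider amount. This is an assumption for illustration and not necessarily how the sliders in the screencast were derived; the function names and the synthetic data are hypothetical.

```python
import numpy as np


def attribute_direction(latents_with, latents_without):
    """Unit vector pointing from the 'without' group mean to the 'with' group mean."""
    d = latents_with.mean(axis=0) - latents_without.mean(axis=0)
    return d / np.linalg.norm(d)


def apply_slider(latent, direction, amount):
    """Move a latent along a direction; 'amount' plays the role of the slider value."""
    return latent + amount * direction


# Synthetic stand-in: pretend the trait (say, the hat) lives along the first axis.
rng = np.random.default_rng(1)
true_dir = np.zeros(512)
true_dir[0] = 1.0
without_hat = rng.normal(size=(500, 512))
with_hat = rng.normal(size=(500, 512)) + 5.0 * true_dir

direction = attribute_direction(with_hat, without_hat)

base = rng.normal(size=512)
edited = apply_slider(base, direction, 2.0)   # slider pushed toward "more hat"
```

In practice the two groups would come from latents whose generated images were labeled by hand or by a classifier, and the edited latent would be passed back through the generator to see the result.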

My interests are not in exploiting these vectors for such specific purposes, but rather treating them as a whole to explore what they capture about what this particular photo studio in Sweden was capable of producing. In the service of producing entirely hypothetical and hallucinated images, this GAN also creates a dynamic, controllable model of the expressive possibilities of this particular photographic studio over a century ago.